After getting all the items in section A, let’s set up PySpark. Unpack the .tgz file. If you don’t know how to unpack a .tgz file on Windows, you can download and install a tool such as 7-Zip.
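If no archive tool is at hand, Python’s standard `tarfile` module can also unpack a .tgz file. The following is a minimal sketch of that alternative, not the method used in this guide; the function name `unpack_tgz` and the paths in the usage comment are hypothetical placeholders.

```python
import tarfile
from pathlib import Path


def unpack_tgz(archive_path, dest_dir):
    """Extract a .tgz archive into dest_dir and return the names now in dest_dir."""
    dest = Path(dest_dir)
    dest.mkdir(parents=True, exist_ok=True)
    with tarfile.open(archive_path, "r:gz") as archive:
        # Only extract archives you trust, such as an official Spark download.
        archive.extractall(dest)
    return sorted(p.name for p in dest.iterdir())


# Hypothetical usage; substitute your own download and target folder:
# unpack_tgz("spark-x.y.z-bin-hadoopN.tgz", "C:/spark")
```

The function returns the top-level entries of the target folder, which makes it easy to confirm that the Spark directory was actually created.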

#Install spark on windows anaconda how to

You can get both Python and Jupyter Notebook by installing the Python 3.x version of Anaconda. If you don’t have Java, or your Java version is 7.x or less, download and install a newer version of Java. You may create the kernel as an administrator or as a regular user.
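To check whether the installed Java is 7.x or older, the version banner printed by `java -version` can be parsed. This is a rough sketch under the assumption that the banner contains `version "..."`, as Oracle and OpenJDK builds normally print; the helper names are made up for illustration.

```python
import re
import subprocess


def parse_java_major(version_output):
    """Return the major Java version from `java -version` output, or None."""
    match = re.search(r'version "(\d+)(?:\.(\d+))?', version_output)
    if match is None:
        return None
    first, second = match.group(1), match.group(2)
    # Releases up to Java 8 are reported as 1.x (for example 1.7.0_80 is Java 7).
    if first == "1" and second is not None:
        return int(second)
    return int(first)


def installed_java_major():
    """Run `java -version` and return the major version, or None if Java is missing."""
    try:
        result = subprocess.run(["java", "-version"], capture_output=True, text=True)
    except FileNotFoundError:
        return None
    # The JVM writes its version banner to stderr, so check both streams.
    return parse_java_major(result.stderr + result.stdout)
```

A result of `None` or a number of 7 or lower suggests that Java should be installed or upgraded before continuing.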

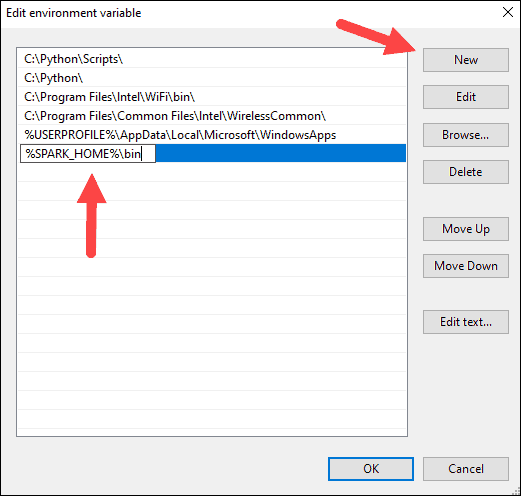

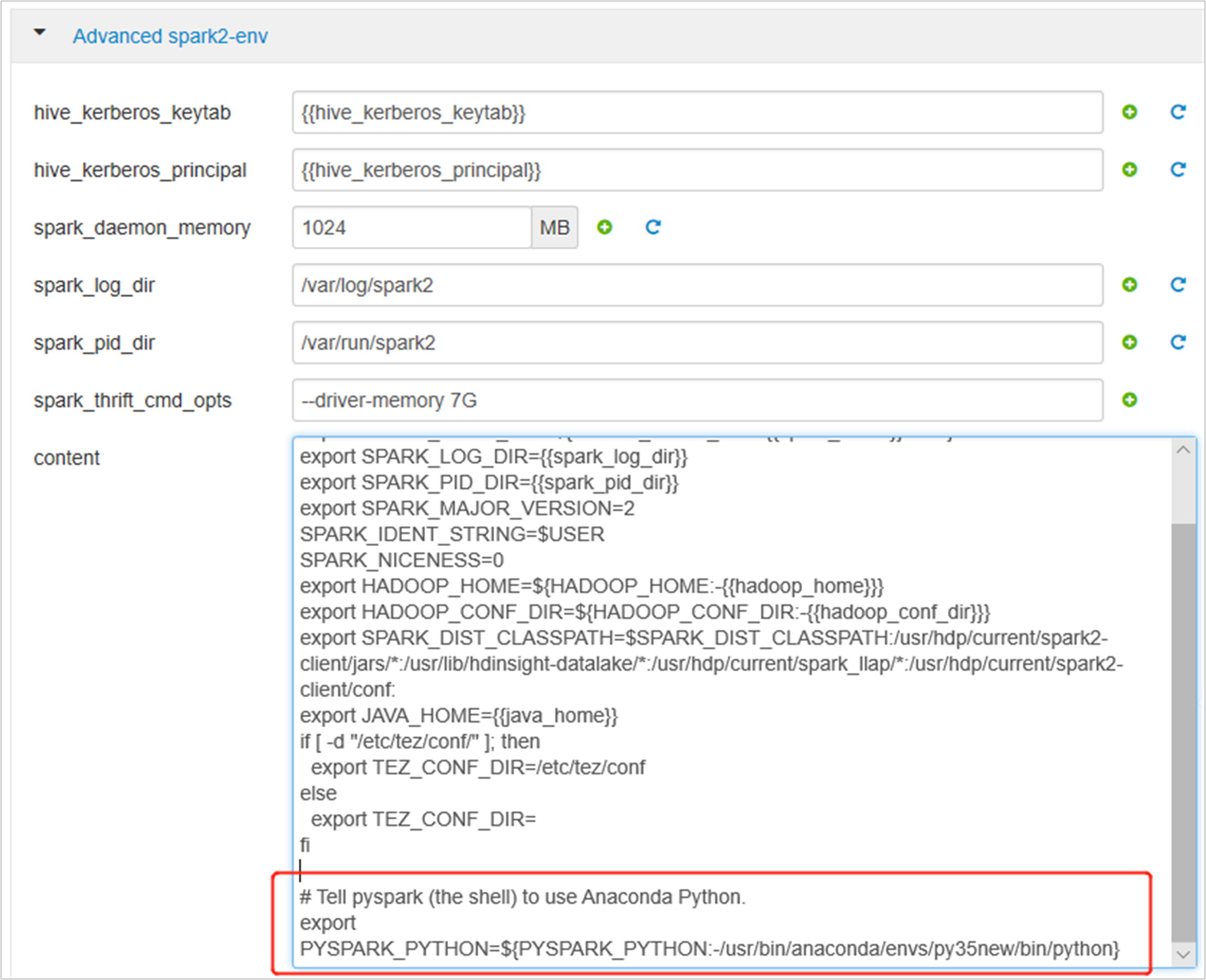

Anaconda Scale can be used with a cluster that already has a managed Hadoop distribution such as Cloudera CDH or Hortonworks HDP. The setup tasks are independent and can be performed in any order. Read the instructions below to help you choose which method to use. Alternatively, you can install Jupyter Notebook on the cluster using Anaconda Scale, then create a notebook kernel for PySpark.

Installing PySpark on Anaconda on Windows Subsystem for Linux works fine and is a viable workaround. I’ve tested it on Ubuntu 16.04 on Windows without any problems.

To install the package with conda, run `conda install -c conda-forge pyspark`. The builds listed for the package are linux-64 v2.4.0, win-32 v2.3.0, noarch v3.0.0, osx-64 v2.4.0, and win-64 v2.4.0.

For example, I unpacked the archive with 7-Zip from step A6 and put the extracted files in a folder of my choice. Add environment variables: the environment variables let Windows find where the files are when we start the PySpark kernel.
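The environment variables can also be set for a single Python session, which is handy for trying things out before changing Windows settings. The sketch below assumes the conventional variable names `SPARK_HOME` and `HADOOP_HOME`, which this text does not spell out, and assumes that winutils.exe sits in the `bin` folder of the Spark directory; the path `C:/spark` is a hypothetical placeholder.

```python
import os
from pathlib import Path


def spark_environment(spark_dir):
    """Build the environment variables for a Spark folder (assumed names)."""
    spark_dir = str(Path(spark_dir))
    return {
        # SPARK_HOME tells PySpark where the unpacked distribution lives.
        "SPARK_HOME": spark_dir,
        # Assumption: winutils.exe was copied into <spark_dir>/bin, so
        # HADOOP_HOME can point at the same folder.
        "HADOOP_HOME": spark_dir,
    }


def apply_environment(variables, environ=os.environ):
    """Copy the variables into the given environment mapping and return it."""
    environ.update(variables)
    return environ


# Hypothetical usage, affecting only the current Python process:
# apply_environment(spark_environment("C:/spark"))
```

Variables set this way disappear when the process exits; the Windows environment variable settings window remains the place for a permanent setup.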

#Install spark on windows anaconda code

When I write PySpark code, I use Jupyter Notebook to test my code before submitting a job on the cluster.

You also need winutils.exe, a Hadoop binary for Windows, from Steve Loughran’s GitHub repo.

Anaconda Scale can be installed alongside existing enterprise Hadoop distributions such as Cloudera CDH or Hortonworks HDP, and can be used to manage Python and R conda packages and environments across a cluster.
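Hadoop on Windows looks for winutils.exe in the `bin` subfolder of its home directory, so a quick check that the file is where it is expected can save some debugging. This is a small sketch; the function name `find_winutils` and the example path are hypothetical.

```python
from pathlib import Path


def find_winutils(hadoop_home):
    """Return the path to bin/winutils.exe under hadoop_home, or None if absent."""
    candidate = Path(hadoop_home) / "bin" / "winutils.exe"
    return candidate if candidate.is_file() else None


# Hypothetical usage:
# print(find_winutils("C:/spark"))
```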

#Install spark on windows anaconda windows 7

I’ve tested this guide on a dozen Windows 7 and 10 PCs in different languages. The first items you need are Python and Jupyter Notebook.

You can find the environment variable settings by typing “environ…” in the search box. The remaining variables are edited in the same environment variable settings window. (Optional, if you see a Java-related error in step C) Find the installed Java JDK folder from step A5.

To run Jupyter Notebook, open Windows command prompt or Git Bash and launch Jupyter from there. Once inside Jupyter Notebook, open a Python 3 notebook. When you press run, it might trigger a Windows firewall pop-up.

PySpark can also be installed from the anaconda channel with `conda install -c anaconda pyspark`. You can develop Spark scripts interactively, and you can write them as Python scripts or in a Jupyter Notebook. You can submit a PySpark script to a Spark cluster using various methods. You can also use Anaconda Scale with enterprise Hadoop distributions such as Cloudera CDH or Hortonworks HDP.
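A notebook cell for checking the installation could look like the sketch below. It is not the cell from the original guide; the helper names and the app name are illustrative, and it assumes PySpark and Java are both installed. The import is placed inside the function so the code can be loaded even on a machine without PySpark.

```python
def start_local_session(app_name="install-check"):
    """Start a local SparkSession; needs PySpark and Java to be installed."""
    # Imported lazily so this module can be loaded even without PySpark.
    from pyspark.sql import SparkSession

    return SparkSession.builder.master("local[*]").appName(app_name).getOrCreate()


def count_rows(spark, n=5):
    """Create a DataFrame holding the numbers 0..n-1 and return its row count."""
    return spark.range(n).count()


# In a notebook cell (requires a working installation):
# spark = start_local_session()
# print(count_rows(spark))
# spark.stop()
```

If the cell returns the row count without a Java or path error, the local setup is most likely working.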

I pressed cancel on the pop-up, as blocking the connection doesn’t affect PySpark. If the notebook cell prints its output without errors, then you have installed PySpark on your Windows system! Please leave a comment in the comments section or tweet me.

In this post, I will show you how to install and run PySpark locally in Jupyter Notebook on Windows.

Python Requirements

At its core PySpark depends on Py4J, but some additional sub-packages have their own extra requirements for some features (including numpy, pandas, and pyarrow).

NOTE: If you are using this with a Spark standalone cluster you must ensure that the version (including minor version) matches or you may experience odd errors.
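Both points can be checked from Python before running a job. The sketch below uses hypothetical helper names: one compares the major and minor parts of two version strings, and the other lists which of the packages named above cannot be imported in the current environment.

```python
import importlib.util


def same_major_minor(local_version, cluster_version):
    """Return True when both version strings share the same major.minor prefix."""
    return local_version.split(".")[:2] == cluster_version.split(".")[:2]


def missing_packages(names=("py4j", "numpy", "pandas", "pyarrow")):
    """Return the names from `names` that cannot be imported in this environment."""
    return [name for name in names if importlib.util.find_spec(name) is None]


# Hypothetical usage; the cluster version must come from your cluster admin or UI:
# import pyspark
# print(same_major_minor(pyspark.__version__, "2.4.0"))
# print(missing_packages())
```

An empty list from `missing_packages()` means all four packages are importable; whether each one is actually needed depends on the PySpark features in use.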

#Install spark on windows anaconda full version

Spark is a unified analytics engine for large-scale data processing. It provides high-level APIs in Scala, Java, Python, and R, and an optimized engine that supports general computation graphs for data analysis. It also supports a rich set of higher-level tools including Spark SQL for SQL and DataFrames, MLlib for machine learning, GraphX for graph processing, and Structured Streaming for stream processing.

You can find the latest Spark documentation, including a programming guide, on the project web page.

Python Packaging

This README file only contains basic information related to pip installed PySpark. This packaging is currently experimental and may change in future versions (although we will do our best to keep compatibility). Using PySpark requires the Spark JARs, and if you are building this from source please see the Spark builder instructions.

The Python packaging for Spark is not intended to replace all of the other use cases. This Python packaged version of Spark is suitable for interacting with an existing cluster (be it Spark standalone, YARN, or Mesos), but does not contain the tools required to set up your own standalone Spark cluster. You can download the full version of Spark from the Apache Spark downloads page.